Nvidia Bets on AI Inference as Chip Revenue Opportunity Hits $1 Trillion

Nvidia, the semiconductor company best known for its graphics processing units (GPUs), is positioning artificial intelligence (AI) inference as a major revenue driver. The company projects that the market opportunity for AI inference chips could reach $1 trillion, a significant expansion beyond its established dominance in AI training. The shift reflects growing demand for efficient, real-time AI processing across industries ranging from autonomous vehicles to cloud computing services.

The Rise of AI Inference in Computing

AI inference is the stage at which a trained model makes predictions or performs tasks on new data, in contrast to training, in which the model learns from large datasets. As AI applications spread through everyday technology, demand for high-performance inference chips has surged. Nvidia's emphasis on this segment reflects its aim to capture value across the entire AI lifecycle, drawing on its expertise in parallel processing to deliver faster and more energy-efficient hardware.

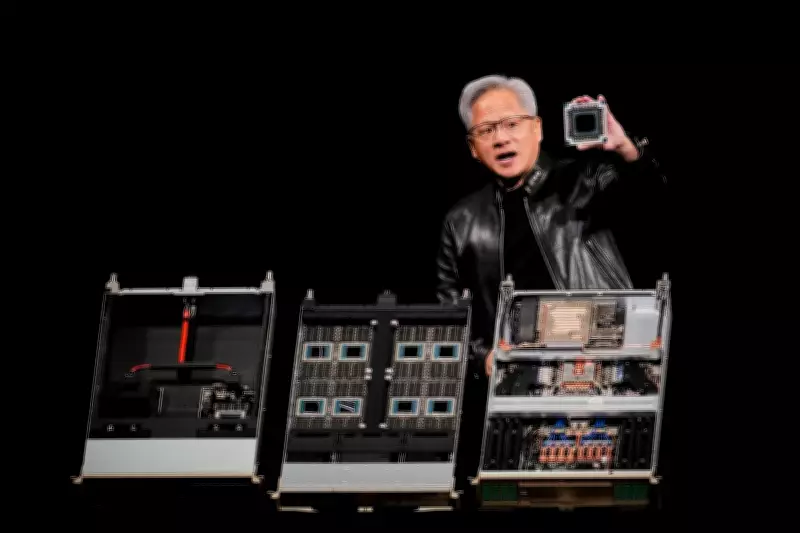

Industry analysts expect the inference market to grow rapidly, driven by advances in machine learning and the integration of AI into consumer devices, healthcare systems, and industrial automation. Nvidia's investment in inference-oriented hardware, such as its Tensor Core GPUs and dedicated inference accelerators, positions the company to capitalize on this trend and could reshape the competitive landscape of the semiconductor industry.

Market Dynamics and Competitive Pressures

The push into AI inference comes amid intensifying competition from rivals such as AMD, Intel, and specialized AI chip startups, all vying for a share of the AI hardware market. Nvidia's early-mover advantage in AI training gives it a strong foundation, but inference presents its own challenges, including the need for cost-effective and scalable solutions. By targeting inference, Nvidia aims to diversify its revenue streams and reduce its reliance on cyclical markets such as gaming and cryptocurrency mining.

Financial experts, including Dan Rohinton, a portfolio manager at iA Global Asset Management, have commented on the broader market outlook, suggesting that Nvidia's strategic pivot could influence investor sentiment and stock performance. The company's ability to innovate and adapt to evolving technological demands will be critical in maintaining its leadership position as the AI revolution accelerates.

Implications for Global Technology and Economy

The projected $1 trillion opportunity in AI inference chips reflects how deeply AI is expected to reshape global economies and technology. As businesses and governments adopt AI for decision-making and operational efficiency, demand for inference capacity is expected to keep rising. For Nvidia, the segment promises substantial financial returns while supporting next-generation applications, from smart cities to personalized medicine.

In summary, Nvidia's bet on AI inference is a forward-looking strategy to capture more of the value created by artificial intelligence. With the chip revenue opportunity estimated at $1 trillion, the company is positioned to play a central role in shaping the future of computing and to capture significant market share as intelligent automation and data-driven applications spread.