The fear of Artificial Intelligence (AI) is both rational and irrational. It is irrational because we tend to fear what we don't understand, and rational because no one, including its creators, seems to really understand what AI will bring, for good or bad.

Is AI really intelligent? Can AI be guilty of a crime? The second question is being tested in court in Florida, where the FSU shooter asked ChatGPT what weapons would be best and when the student union would be busiest. ChatGPT apparently provided answers without raising any warning flags for authorities.

The AFP reported, "Now Attorney General James Uthmeier wants to know whether that makes OpenAI a criminal. 'If the thing on the other side of the screen was a person, we would charge it with homicide,' he said, announcing a criminal investigation into ChatGPT maker OpenAI and leaving open the possibility of charges against the company or its employees."

Lawsuits Involving Teens and AI Chatbots

Gavin Tighe, senior partner at Gardiner Roberts LLP in Toronto, says this is a case of the law trying to catch up to technology. "What jail would you send AI to? I do think it is a problem to fit the technology into the law, as opposed to the people or companies that own the technology, that they may be responsible for what it does, just like people who own any type of technology are responsible when it commits harm to others."

The Florida case resembles the one arising from the Tumbler Ridge shooting in Canada, where the victims are bringing a civil suit against the owners of ChatGPT. The chatbot had identified a potential shooter, but the humans behind it did not warn police. But Tighe says, "AI is just responding to questions asked. It is very difficult to put the round peg into the square hole of law which is human law for humans."

The Los Angeles Times reports, "Adam Raine, a California teenager, used ChatGPT to find answers about everything. But his conversations with a chatbot took a disturbing turn when the 16-year-old sought information from ChatGPT about ways to take his own life before he died by suicide in April. Now the parents of the teen are suing OpenAI, the maker of ChatGPT."

AI Chatbots Potentially Perceived as 'Friends'

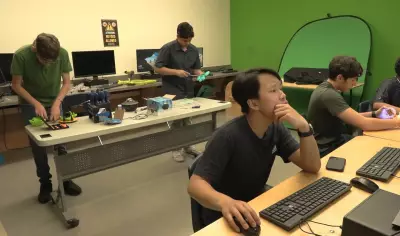

Francis Syms, Associate Dean of Information and Communications Technology at Humber Polytechnic, says that some people form a relationship with a chatbot that goes beyond getting answers to questions. The exchange becomes a conversation, as if with a real person. Syms said, "These stories are becoming so common that the premier of Manitoba, Wab Kinew, is looking at banning AI chatbots in the classroom. People become addicted and they can't give it up."

"I think what is really scary is that people are using ChatGPT to talk about work, and then over time it sounds like a companion and then it becomes your friend or maybe potentially your special friend." Could AI, a "friend" with no moral compass, send us in the wrong direction? "They are sycophantic," Syms says, "in that they reinforce what you feed them, so if you have negative ideas about who you are, it reinforces that. It mostly tells you that the thing you are thinking is the right thing to think."

We are living in a real-life dystopian novel, and these court cases will be hugely consequential.