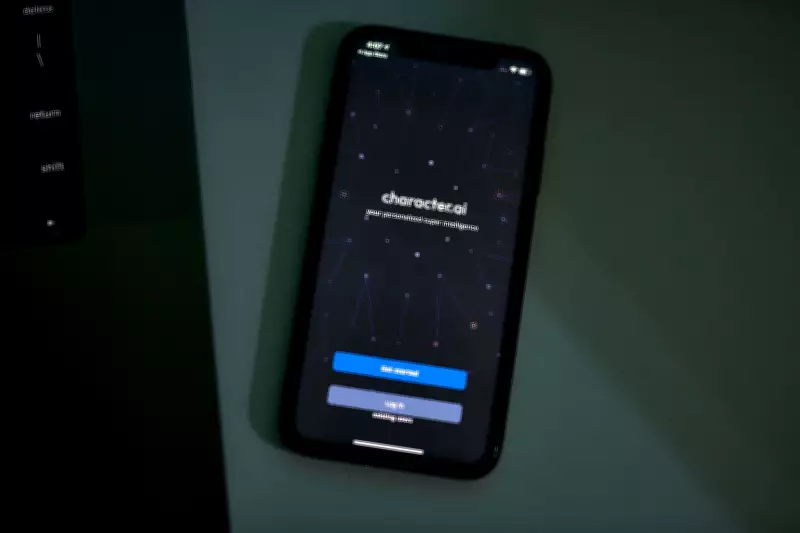

Two U.S. senators are demanding that artificial intelligence companies disclose their safety practices, citing concerns about child safety and several lawsuits. The senators have requested detailed information from AI companion app developers on how they protect young users from potential harms, including inappropriate content and data privacy risks.

Senators' Concerns

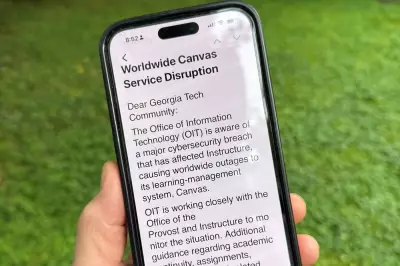

The lawmakers expressed alarm over reports that AI chatbots and virtual companions may expose children to harmful interactions or collect personal data without adequate safeguards. In a letter sent to major AI companion app companies, they asked for specifics on age verification, content moderation, and data security measures.

Legal and Regulatory Pressure

This inquiry comes amid a wave of lawsuits alleging that AI companion apps have caused emotional distress or facilitated exploitation of minors. The senators emphasized that companies must prioritize child safety and comply with existing laws, including the Children's Online Privacy Protection Act (COPPA).

Industry observers note that the demand for transparency could lead to stricter regulations for AI companion apps, which have grown rapidly in popularity. The senators have given the companies a deadline to respond with detailed safety reports.